Motion Capture Unreal Engine Pipeline, Blog#20

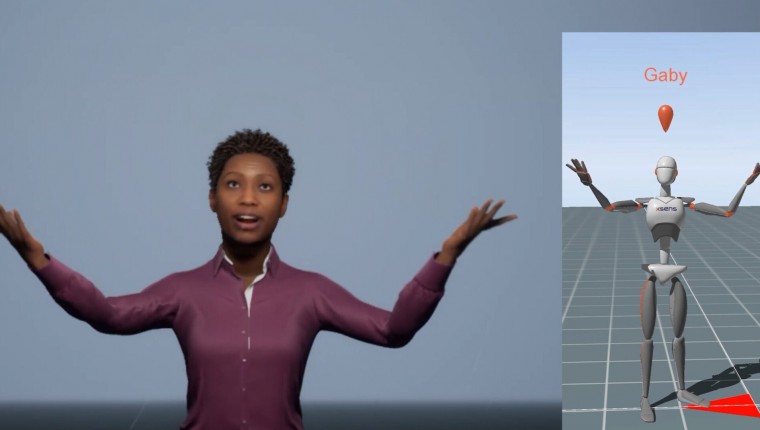

For the past week, I have been testing different methods of bringing motion capture data from MVN Animate into Unreal Engine. The reason is that I am learning that when I bring in my motion capture data via Live Link, the result sometimes does not match the original animation as closely as it should.

Animation Curves

In order to “fix” this, I first had to be able to see and identify the problem, so I started looking at the animation curves. I had never looked at animation curves until I started exploring the backward solver control rig option inside the MetaHumans project.

I started working on a short video showing how I am editing, adding to, and adjusting the facial animation I recorded in the MetaHumans project with Faceware Studio via the Glassbox Live Client plugin. When you have an animation, you can now bake it to the control rig in Unreal. What does this mean?

It means that you can bake all of the animation onto the control rig and then make it additive. Since the animation curves are already baked onto the control rig, you can then either add certain expressions by creating keyframes, or remove them. I am adding them.

When you select a control, say the eyebrow, you can look at its X, Y, and Z values in the timeline, then switch to the curve view to see what the curves look like for that selection. If you see strange results there when playing back your animation, you can adjust the curve directly and smooth out the animation.
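To give a feel for what "smoothing out" a curve does to the underlying numbers, here is a minimal sketch in plain Python. It is not how Unreal's curve editor works internally, and it does not use the Unreal API; the function name `smooth_curve` and the sample eyebrow values are made up for illustration. It simply applies a centered moving average to a list of per-frame values, which is one common way to flatten a one-frame spike.

```python
def smooth_curve(values, window=3):
    """Return a centered moving average of per-frame curve values.

    `window` is the number of frames averaged around each frame.
    Near the ends of the curve, only the frames that exist are averaged.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        neighbours = values[lo:hi]
        smoothed.append(sum(neighbours) / len(neighbours))
    return smoothed


# Hypothetical eyebrow curve with a one-frame spike at frame 3.
brow_raise = [0.0, 0.0, 0.0, 0.9, 0.0, 0.0, 0.0]
print(smooth_curve(brow_raise))
```

The spike gets spread across its neighbouring frames and its peak drops, which is roughly what you are doing by hand when you drag a stray key back in line with the rest of the curve. A larger `window` smooths more aggressively, at the cost of softening motion you may have wanted to keep.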

FBX

Another method I am testing is using FBX files to import animations. Because Xsens exports the animation as skeletal data with no mesh, you cannot import it directly into Unreal. Instead, you can “trick” Unreal by bringing that FBX file into, say, Blender and attaching a mesh to it. Any mesh. I tested this by attaching a box to the root bone and then importing the data. Guess what? It works! And it works because MVN lets you choose to make the first frame a T-pose, which is the pose the data needs in order to be retargeted.

However, Xsens was kind enough to send me their Xsens skeleton mesh, and that removed the need to bring the data into another 3D package. Instead, I just imported the skeleton mesh into Unreal and then imported all of my other animations by assigning them to this skeleton mesh. Brilliant! Thank you, Xsens.

Take Recorder Live Link Source

I just learned why Take Recorder takes forever to process in 4.26 when recording Live Link data. A way around this is to record only the Live Link source instead of adding the Live Link skeletal mesh. By doing it this way, and making sure to uncheck timecode in the Take Recorder settings, you can record all of the actual data from MVN into Unreal.

Then you bake the recorded animation sequence to the Live Link skeletal mesh, and voilà! Not only does this eliminate the long processing times when recording, but the curves are cleaner!
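If you want a rough number to back up "cleaner" rather than judging by eye, one option is to compare how jagged two versions of the same curve are. The sketch below is plain Python, not Unreal API code, and the function name `curve_roughness` and both sample lists are invented for illustration. It assumes you have copied out the per-frame values of one channel from each recording, and it sums the absolute second differences, so a perfectly straight ramp scores zero and frame-to-frame jitter pushes the score up.

```python
def curve_roughness(values):
    """Sum of absolute second differences of per-frame values.

    A straight line scores 0; the more the curve zigzags from
    frame to frame, the higher the score.
    """
    return sum(
        abs(values[i - 1] - 2 * values[i] + values[i + 1])
        for i in range(1, len(values) - 1)
    )


# Hypothetical samples of the same rotation channel from two recordings.
source_only = [0.0, 1.0, 2.0, 3.0, 4.0]    # recorded via the Live Link source
with_mesh = [0.0, 1.4, 1.8, 3.3, 4.0]      # recorded with the skeletal mesh

print(curve_roughness(source_only), curve_roughness(with_mesh))
```

The score only means something when comparing the same channel over the same frame range, and fast intentional motion also raises it, so treat it as a sanity check alongside looking at the curves, not a replacement for it.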